Getting Started with MAF Agents 1.5

Build production-grade AI agents in .NET — from zero to running in minutes.

What we'll cover

What is an AI Agent?

The concept: LLM, Tools, Reasoning and Context — how they work together to create autonomous behaviour.

Introducing MAF

What Microsoft Agent Framework is, where it comes from, and why it matters for .NET developers.

The 3 Layers of a MAF Agent

OpenAI Client → IChatClient → AIAgent. How the abstraction stack fits together.

Live Demo + Aspire Observability

Build your first agent from scratch and watch it run — with full GenAI traces in .NET Aspire.

What is an AI Agent?

🧠 LLM — The Brain

A large language model (GPT-4o, Claude, Gemini…) that understands language, reasons over input, and decides what to do next.

🔧 Tools — The Hands

Functions the agent can call: search the web, query a database, call an API. The LLM decides when and how to use them.

📚 Context — The Memory

Conversation history, session state, user profile. Gives the agent awareness of what happened before this turn.

An agent is not just a chatbot: it thinks, decides, and acts.
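To make the brain / hands / memory split concrete, here is a minimal sketch of an agent loop in plain C#. It does not use MAF or a real model: `Decide` is a hard-coded stand-in for the LLM, and the `get_time` tool and the `user:` / `tool:` / `assistant:` history format are assumptions made for illustration only.

```csharp
using System;
using System.Collections.Generic;

// Tools ("the hands"): plain functions keyed by name. get_time is hypothetical.
var tools = new Dictionary<string, Func<string, string>>
{
    ["get_time"] = _ => "12:00",
};

// Context ("the memory"): the running conversation history.
var history = new List<string>();

// Stand-in for the LLM ("the brain"): a fixed rule that either requests a
// tool call or returns a final answer. A real model would make this choice
// from the prompt and the history.
(string? Tool, string Text) Decide(IReadOnlyList<string> context)
{
    string last = context[context.Count - 1];
    if (last.StartsWith("user: what time", StringComparison.Ordinal))
        return ("get_time", "");
    if (last.StartsWith("tool: ", StringComparison.Ordinal))
        return (null, $"It is {last.Substring("tool: ".Length)}.");
    return (null, "I can only tell the time.");
}

string Run(string userInput)
{
    history.Add($"user: {userInput}");

    // Think, decide, act: loop until the "model" stops asking for tools.
    for (int step = 0; step < 5; step++)
    {
        var (tool, text) = Decide(history);
        if (tool is null)
        {
            history.Add($"assistant: {text}");
            return text;
        }
        history.Add($"tool: {tools[tool](text)}");
    }
    return "Stopped: too many tool calls.";
}

Console.WriteLine(Run("what time is it?")); // prints "It is 12:00."
```

The loop is what separates an agent from a single chat completion: the tool result goes back into the history, and the model is asked again until it produces a final answer.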

What is MAF?

The next generation of Semantic Kernel & AutoGen

Built by the same Microsoft teams. Combines AutoGen's simple agent abstractions with Semantic Kernel's enterprise features — session state, middleware, telemetry, and type safety.

🌐 Multi-provider support

Azure OpenAI · OpenAI · Anthropic · Gemini · GitHub Copilot · Ollama · Bedrock — switch with one line of code.

🤝 Agents

Individual agents that use LLMs, call tools and MCP servers, and generate responses. Simple API, powerful runtime.

🕸️ Workflows

Graph-based multi-agent orchestration: sequential, concurrent, group chat, handoff, and Magentic patterns.

📡 Interoperability

A2A (Agent-to-Agent), AG-UI protocol, MCP servers, Azure Foundry hosting — works with the whole ecosystem.
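Two of the workflow patterns named above, sequential and concurrent, can be sketched without any framework. In this illustration each "agent" is just a `Func<string, Task<string>>`; the `researcher`, `writer` and `reviewer` names and the `RunSequential` / `RunConcurrent` helpers are hypothetical and are not MAF APIs.

```csharp
using System;
using System.Linq;
using System.Threading.Tasks;

// Hypothetical stand-ins for agents: each maps an input string to an output.
Func<string, Task<string>> researcher = input => Task.FromResult($"facts({input})");
Func<string, Task<string>> writer = input => Task.FromResult($"draft({input})");
Func<string, Task<string>> reviewer = input => Task.FromResult($"review({input})");

// Sequential: each agent receives the previous agent's output.
async Task<string> RunSequential(string input, params Func<string, Task<string>>[] agents)
{
    string current = input;
    foreach (var agent in agents)
        current = await agent(current);
    return current;
}

// Concurrent: every agent receives the same input; results keep the agents' order.
async Task<string[]> RunConcurrent(string input, params Func<string, Task<string>>[] agents)
    => await Task.WhenAll(agents.Select(agent => agent(input)));

Console.WriteLine(await RunSequential("MAF", researcher, writer, reviewer));
// prints "review(draft(facts(MAF)))"

Console.WriteLine(string.Join(" | ", await RunConcurrent("MAF", researcher, writer)));
// prints "facts(MAF) | draft(MAF)"
```

A graph-based workflow generalises both shapes: sequential is a chain of edges, concurrent is a fan-out followed by a join.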

The 3 Layers of a MAF Agent

Layer 1 — OpenAI Client

The raw connection to your LLM provider. Handles authentication, endpoint routing, and HTTP. Swap providers without changing anything above.

Layer 2 — IChatClient

The standard AI abstraction from Microsoft.Extensions.AI. Provider-agnostic interface — swap models without changing agent code above.

Layer 3 — AIAgent

The MAF agent: adds tools, context, session state, instructions, and multi-turn conversation on top of IChatClient.

💡 Each layer adds capabilities — but you only need the ones you use. Start with just OpenAI Client + AIAgent and add layers as you grow.
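The layering idea can be illustrated with stand-ins in plain C#. This is a sketch of the pattern only: `CallProviderA`, `CallProviderB` and `CreateAgent` are hypothetical, a `Func<string, Task<string>>` plays the role of `IChatClient`, and none of this is the actual MAF or Microsoft.Extensions.AI API.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Layer 1 (hypothetical): provider-specific calls, each with its own shape.
Task<string> CallProviderA(string model, string prompt) =>
    Task.FromResult($"A/{model} saw {prompt.Split('\n').Length} line(s)");
Task<string> CallProviderB(string prompt) =>
    Task.FromResult($"B saw {prompt.Split('\n').Length} line(s)");

// Layer 2 (stand-in for IChatClient): one provider-agnostic shape, prompt in, text out.
Func<string, Task<string>> clientA = prompt => CallProviderA("model-a", prompt);
Func<string, Task<string>> clientB = CallProviderB;

// Layer 3 (stand-in for AIAgent): adds instructions and multi-turn history
// on top of whichever chat client it is given.
Func<string, Task<string>> CreateAgent(Func<string, Task<string>> client, string instructions)
{
    var history = new List<string> { $"system: {instructions}" };
    return async userInput =>
    {
        history.Add($"user: {userInput}");
        string reply = await client(string.Join("\n", history));
        history.Add($"assistant: {reply}");
        return reply;
    };
}

var agent = CreateAgent(clientA, "You are a helpful assistant.");
Console.WriteLine(await agent("Hello"));     // prints "A/model-a saw 2 line(s)"
Console.WriteLine(await agent("And again")); // prints "A/model-a saw 4 line(s)"

// Swapping the provider touches only the client passed in, not the agent logic.
var agentB = CreateAgent(clientB, "You are a helpful assistant.");
Console.WriteLine(await agentB("Hello"));    // prints "B saw 2 line(s)"
```

The second call sees four lines because the agent layer, not the client, carries the conversation history forward.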

Visibility into your agent — two lines

📋 .UseLogging(loggerFactory)

Plugs into the standard Microsoft.Extensions.Logging pipeline. Every agent action — LLM calls, tool invocations, errors — appears as structured log entries. Works with any log sink: console, Seq, Application Insights.

📡 .UseTelemetry()

Emits OpenTelemetry spans following the GenAI semantic conventions. Captures model name, token counts, prompt content, tool calls, and latency — all as structured trace data.

💡 Add both to your AIAgentBuilder chain — they're composable middleware. Zero config required beyond a LoggerFactory.

LLM calls: model, tokens used, latency, prompt + completion content

Tool calls: function name, input args, result, duration, recorded as child spans

Token usage: prompt tokens, completion tokens, per call and aggregated

Errors: failed tool calls and LLM errors appear as error spans with full context
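To show what this kind of trace data looks like at the .NET level, the sketch below emits a parent span for an LLM call and a child span for a tool call using only `System.Diagnostics`. It is not the MAF telemetry middleware: the source name `Demo.Agent`, the model name `demo-model`, the tool name and the token numbers are made up, and the `gen_ai.*` tag names follow the OpenTelemetry GenAI semantic conventions as an assumption about what such middleware records.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;

// Hypothetical source name; a real framework registers its own.
var source = new ActivitySource("Demo.Agent");
var captured = new List<Activity>();

// The listener stands in for an exporter (for example an OTLP endpoint).
using var listener = new ActivityListener
{
    ShouldListenTo = s => s.Name == "Demo.Agent",
    Sample = (ref ActivityCreationOptions<ActivityContext> options) =>
        ActivitySamplingResult.AllData,
    ActivityStopped = activity => captured.Add(activity),
};
ActivitySource.AddActivityListener(listener);

// Parent span for the LLM call, tagged with GenAI-style attributes.
using (var chat = source.StartActivity("chat demo-model", ActivityKind.Client))
{
    chat?.SetTag("gen_ai.operation.name", "chat");
    chat?.SetTag("gen_ai.request.model", "demo-model");

    // A tool invocation started inside the call becomes a child span.
    using (var tool = source.StartActivity("execute_tool get_time"))
    {
        tool?.SetTag("gen_ai.tool.name", "get_time");
    }

    // Made-up token counts, recorded once the "response" is known.
    chat?.SetTag("gen_ai.usage.input_tokens", 18);
    chat?.SetTag("gen_ai.usage.output_tokens", 8);
}

// Spans are captured in the order they stop: the child first, then the parent.
foreach (var activity in captured)
{
    string parent = activity.Parent?.DisplayName ?? "none";
    Console.WriteLine($"{activity.DisplayName} (parent: {parent}, {activity.Duration.TotalMilliseconds:F1} ms)");
}
```

Because spans nest through `Activity.Current`, a tool call made while the LLM span is open is attached to it automatically, which is what produces the parent/child hierarchy in a trace viewer.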

From zero to running in 15 lines

    // Layer 1: connect to your LLM provider
    var openAiClient = new OpenAIClient(
        new ApiKeyCredential(Environment.GetEnvironmentVariable("OPENAI_API_KEY")!));

    // Layer 2: wrap as IChatClient (Microsoft.Extensions.AI)
    IChatClient chatClient = openAiClient
        .AsChatClient(modelId: "gpt-4o-mini");

    // Layer 3: build your AIAgent with observability
    AIAgent agent = new AIAgentBuilder()
        .WithInstructions("You are a helpful assistant.")
        .UseChatClient(chatClient)
        .UseLogging(loggerFactory)   // → structured logs in Aspire
        .UseTelemetry()              // → OpenTelemetry GenAI traces
        .Build();

    // Run it!
    string answer = await agent.RunAsync("What is MAF 1.5?");
    Console.WriteLine(answer);

📦 NuGet packages

🔌 Layer 1

Raw provider connection. Swap to AzureOpenAIClient, Anthropic or Gemini without touching anything else.

🔁 Layer 2

IChatClient is the standard .NET abstraction — works with any AI extension in the ecosystem.

📊 Observability

Two lines give you full GenAI trace data in .NET Aspire — token counts, latency, tool calls.

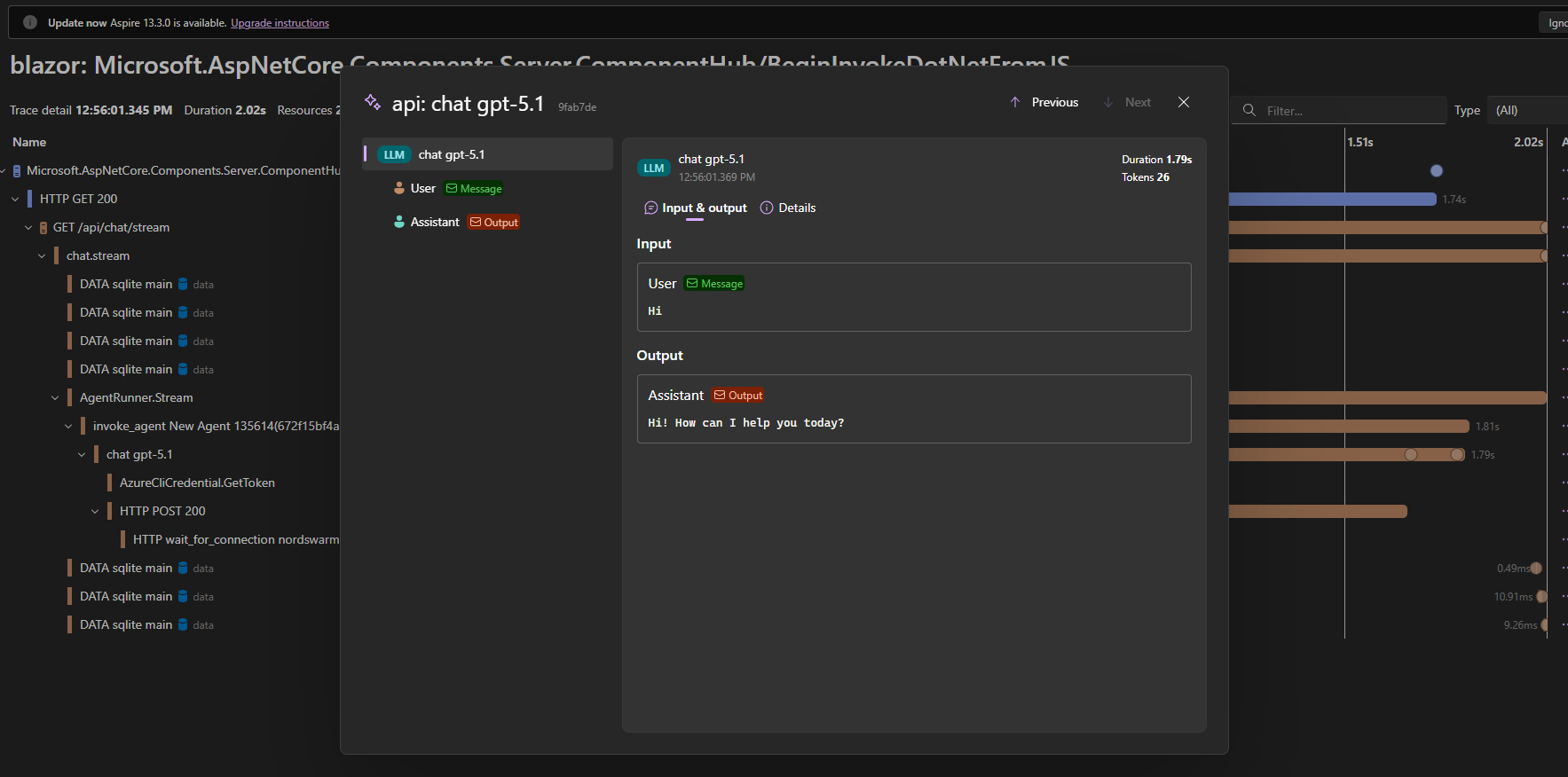

See exactly what your agent does

💬 Prompt & response made visible

Aspire shows the exact user input and assistant output for each LLM call, so the agent is no longer a black box. Ideal for debugging and prompt tuning.

⏱️ Duration & token count

Each LLM span shows its duration (e.g. 1.79s) and token usage (e.g. 26 tokens), visible immediately without extra logging code.

🌳 Full trace hierarchy

From HTTP request → AgentRunner → invoke_agent → chat gpt-5.1: see exactly where in the call stack the LLM is invoked.

⚡ Just .UseTelemetry()

One line in your AIAgentBuilder. Aspire picks it up automatically via OpenTelemetry, with zero extra configuration.

Let's build it live

Building a MAF 1.5 agent from scratch — in the IDE, step by step.

Start building today

MAF 1.5 is production-ready, open source, and designed for .NET developers. Three layers, fifteen lines of code, and you have a running AI agent with full observability.